Solutions

- Overview

- Application Infrastructure

- Cloud Infrastructure for Applications

- Hybrid Cloud Infrastructure

- Hybrid Cloud With VMware

- Infrastructure as a Service

- Overview

- Data Protection & Cyber Resiliency

- Intelligent DataOps

- Cloud Data Management

- Data Lakes and Data Warehouses

- Data Integration

- AI-Driven Solutions

- Overview

- Application Reliability Centers

- Cloud Modernization

- Application Engineering

- ERP Modernization for SAP

- ERP Modernization for Oracle

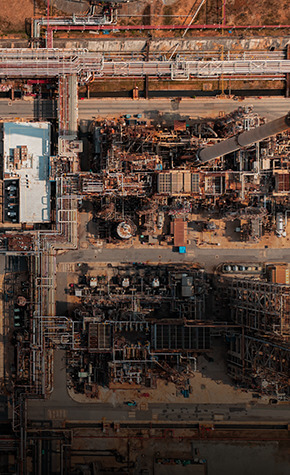

Making Sustainability Achievable with Data.

Intelligent digital infrastructure and data platforms to reduce data center energy consumption and carbon footprint. Expertise to help you uncover insights to enable data-driven sustainability.

Explore Sustainable Solutions

Your Cloud, Your Way.

Navigating the private, hybrid, and multicloud landscape can be complex. Ensure your success with an integrated approach to modernizing core infrastructure, apps, and data to achieve your objectives.

See Modernize the Digital Core

Your Infrastructure, Your Way.

Adapt to the needs of future workloads with a modern edge-to-core-to-cloud infrastructure that delivers efficiency, agility, and resilience.

Explore Modern Infrastructure

Modernize and Optimize Resources for Availability, Agility, and Performance.

Support the evolving demands of today’s enterprise workloads with modern data infrastructure.

Explore App Infrastructure

Get More Value from Data than Ever with Cloud Object Storage for S3 Apps

Deliver services through a centralized, infinitely scalable platform, providing native S3 integration, faster data access, and reduced TCO across hybrid and multicloud architectures.

See Cloud App Infrastructure

Hybrid IT and Cloud Ops Solutions for the Evolving, Data-Driven Enterprise.

Manage IT service levels, not infrastructure, with a central operating model across data centers, the edge, and public clouds.

Hybrid Cloud Infrastructure

Your VMware, Only Better.

Experience VMware with greater simplicity, resilience, and agility. Your workloads, at the right performance, and the right cost. It's all in the Power of +.

Visit Hybrid Cloud with VMware

EverFlex XaaS: Your Infrastructure, Only Better.

Maximize the value, scalability, and flexibility of your data with EverFlex infrastructure, data protection, and storage as a service.

Explore EverFlex XaaS

Your Data Fabric, Your Way.

Gain visibility, fuel analytics/data-driven decisions, and speed innovation. Data modernization services and DataOps solutions help you take control of your data, from edge to core to cloud.

Explore Data Modernization

Ensure Availability and Integrity of Your Data, No Matter Where It Lives.

Protect customer and user experience with solutions to drive agility and availability to meet fast changing needs, prevent downtime, and guard against cyberattacks and other threats.

Explore Data Protection

Optimize Your Data Fabric for Digital Innovation, Efficiency, and Growth.

Drive total data quality, and cut time to insight from weeks to hours, with Lumada DataOps. Democratize access, simplify management, reduce costs, automate scalability, and more.

Explore Intelligent DataOps

Manage and Optimize the Value of Critical Data Across Cloud Environments.

Manage data across cloud environments with DataOps. Enable governance and compliance, reduce risk, and leverage tribal knowledge to drive better decisions, insight, and competitive advantage.

Explore Cloud Data Management

Build Data Lakes and Warehouses that Enable Actionable Insight and Value.

Build data lakes and warehouses and make data actionable using DataOps for superior onboarding, cost optimization, protection, and discovery, using accurate, relevant data.

Visit Data Lakes & Warehouses

Automate Integration at Scale to Create a Modern Data Pipeline and Value.

Deliver automated, agile data workflows, from edge to multicloud environments, regardless of data volume, variety, or velocity.

Explore Data Integration

Speed Analytic Advantage with Proven AI Expertise, Solutions, and Frameworks.

Jump-start your AI-driven enterprise with advanced analytics and trusted solutions for achieving proven business outcomes.

Explore AI-Driven Solutions

Modernize Your Applications, Your Way.

Build, modernize, and manage critical apps across the enterprise and ecosystem with agility, while driving innovation and reducing TCO.

See Application Modernization

Your Applications. Always-on. Reliable. Cost-Effective.

Comprehensive services to optimize resilience and cost for always-on business. Design, build, run, and operate workloads across private, public, hybrid, and multicloud environments.

Visit App Reliability Centers

Migrate to Cloud. Modernize Your Apps.

Plan and migrate to cloud, and build cloud-ready applications while ensuring business agility and elastic scalability.

Explore Cloud Modernization

Modernize Product Development Using Next-Gen Technologies and Experience.

Stay ahead by building a business-centric, customized application portfolio with next-gen technologies, using modern engineering principles, automation, and expertise.

Explore Digital Engineering

Fast-Track Innovation in SAP Environments, Unleashing Agility and Growth.

Maximize value from SAP ERP applications with our expertise and support. Leverage data to drive insights and create innovative solutions to your toughest business challenges.

Explore SAP ERP Modernization

Expert People and Processes to Drive Innovation in Oracle ERP Environments.

Optimize your Oracle applications with our expert support. Improve agility, performance, and results through better business decisions and processes.

Visit Oracle ERP Modernization

Your Digital Future, Delivered Today.

Digital transformation is accelerating across all industries, driving the need for greater innovation, agility and resilience. Get there faster with data-driven industrial operations.

Visit IoT Solutions Overview

Enhance Efficiency, Safety, and Experience Using Smart Spaces.

Smart spaces are emerging everywhere. Get started creating yours, leveraging insights from video, Lidar, and IoT to create smart spaces that are healthier, safer, more sustainable, and more.

Explore Smart Spaces

Accelerate Industrial Digitalization with Lumada Manufacturing Insights.

Create competitive advantage via data-driven insight, automation, and processes. Address production challenges, enable visibility, improve loyalty with predictive models, and more.

Explore Manufacturing Insights

Reduce Risk and Extend Life Cycles with Lumada Inspection Insights.

Leverage visual intelligence solutions to automate your infrastructure and asset inspection processes to reduce risk, improve public safety, and extend life cycles.

Explore Inspection Insights

Optimize Asset Health, Performance, and Value with Lumada APM.

Deploy data-driven asset health and performance insights to keep your assets delivering optimum performance, safety, reliability, and value with Lumada Asset Performance Management.

Explore Lumada APM

Empower Mobile Users to Execute at Scale with Lumada FSM.

Lumada Field Services Management is a scalable, intuitive inspection, maintenance, and repair solution that equips mobile users to execute work orders with optimal efficiency.

Explore Lumada FSM

Industrial Strength Asset Management and Resource Planning with Lumada EAM.

Lumada Enterprise Asset Management enables industrial organizations to optimize outcomes by managing physical assets throughout their life cycle at reduced operating cost and capital investment.

Explore Lumada EAM

Solutions to Accelerate Data-Driven Transformation Across Industries.

Transform your business supported by a trusted partner with deep experience in every aspect of data operations, across multiple industries and technologies.

Industry Solutions Overview

Financial Services, Your Way.

The future belongs to those who capitalize on change. The right partner can help you accelerate digital maturity, create real customer value, and steer your path to success.

Financial Services Solutions

Accelerate Digitalization in Your Manufacturing 4.0 Journey.

Capitalize on the value of data from across the business ecosystem to enable superior outcomes. Create end-to-end visibility, resilience, and responsiveness to drive industrial digitalization.

Manufacturing Solutions

Optimize and Transform Business Operations for the Digital Future.

Streamline operations and accelerate energy transition to create competitive advantage and reduce risk in an increasingly complex and dynamic business environment.

Energy and Utilities Solutions

Simplify and Automate Care Delivery for Better Results and Patient Outcomes.

Turn massive data stores and types into opportunities using intelligent automation. Enable better decisions, gain deeper patient insights, improve lives, and enhance your organization.

Healthcare & Life Sciences

Leverage Data-Driven Insights to Create Superior Digital Retail Outcomes.

Integrate data from disparate sources to build a foundation for omnichannel retail success. Reveal unique insights, optimize operations and deliver superior customer experiences.

Retail Industry Solutions

Improve Public Services and Safety with Data-Driven Solutions for Government.

Leverage data to build and support successful, healthy communities and economies using innovative data solutions for national, state, and local government.

Solutions for Government

Turn Challenges into Opportunities in a Rapidly Changing World.

Enhance transportation safety, efficiency, and experience, and enable digital innovation and monetization across the passenger travel, transit, freight, and logistics markets.

Solutions for Transportation

Products

- Overview

- Converged Infrastructure

- Hyper Converged Infrastructure

- Cloud Foundation

- Cisco Validated Design

- Overview

- Storage Virtualization OS

- AI Operations Management

- Software-Defined Storage (SDS)

- Data Protection & Cyber Resiliency

60 years of innovation helps you trust and unlock the value of your data

Our commitment to innovation is why we're consistently ranked as the most reliable and trusted storage platform – and why 88% of the leading global banks trust their data to Hitachi Vantara.

Isn’t it nice when storage just works?

For the last 22 years we have offered guaranteed 100% data availability on all VSP models. That’s why 85% of the Fortune 100 trust Hitachi storage.

Harness The Power of Unstructured Data

Unleash the potential of your unstructured data. Our integrated approach accelerates the value of data by combining enhanced cloud data management and accelerated performance to meet modern application demands.

Proven, Trusted and Simplified.

Trusted for more than 40 years, Hitachi ensures mainframe solutions help you get the most out of your data, no matter where it lives.

Harnessing the Power of Data

Hitachi gives you the power to handle any type of workload challenge. From intelligent storage to automated disaster recovery, we have a simple solution for you.

The intelligent Storage OS Platform that powers solutions

This is the software that provides all the awesome features for our storage platforms. From data replication to virtualization, SVOS delivers it all!

Smarter Infrastructure with AI

Now your storage is self-driving with AIOps management, analytics and automation. One management suite for all your storage solution needs.

Flexibility, Efficiency and Scalability Your Way.

Distributed applications need the right storage. VSS has full VSP integration with enterprise support running on prem or in the cloud.

More: Choice, Control and Trust.

Easily enhance data protection to support business continuity and cyber resilience in ways that work best for your business, in the cloud and on-prem.

Connect Your Data, Trust Your Information, Optimize Your Business

Data experienced with an intelligent data management platform driving revenue growth, business optimizations and improved customer experiences.

Unlock Data Insights, No Matter Where It Lives

Intelligent data discovery and transformation improves productivity by revealing insights more quickly to make your business smarter.

Onboard, Prepare and Activate Data Faster.

Rapidly build and deploy data pipelines for end-to-end data integration and analytics at enterprise scale. Integrate data lakes, data warehouses, and devices, and orchestrate data integration flow across all environments.

Find, Understand and Govern Your Data

Data intelligence delivered across all structured and unstructured data. A data culture fostered by trusted & actionable data with observability, lineage, quality and reliability.

Intelligent Data Tiering

Reduce Hadoop infrastructure costs with an intelligent data tiering solution using object storage for seamless application access.

Meaningful analysis- productive AI and OT/IT data management

IoT application assembly and enablement for enterprise-wide industrial data operations and information fusion. Power reliable and actionable insights for sustainable performance improvements.

Robust Industrial IoT Core Capabilities at Enterprise Scale

Accelerate and scale operations application deployment with a complete IIoT data platform framework, including core, gateway, digital twins, and machine learning (ML) services.

Accelerate Delivery Effort for AI and ML Applications

Toolkits that simplify industrial IoT software solutions delivery with packaged digital twins, machine learning (ML) services, and deployable ML models.

Flexibility, Simplicity & Scale for Changing Business Demands.

XaaS: Your infrastructure, only better. Get the ability to scale as needed, guaranteed SLAs, and pay-as-you-go pricing to align IT costs with your business.

Agile, cloud-managed storage with guaranteed business outcomes.

Fast and flexible cloud consumption subscription service for storage. Enjoy proven performance of pay-as-you-grow storage and agile storage services with a cloud-based self-service management console.

Gain agility, choice and reassurance with consumption-based protection.

Level up data protection quickly and easily with cloud-managed pay-as-you-go, OPEX options for hybrid environments.

Accelerate Your Infrastructure Time-to-Value

Hitachi Vantara Professional Services will help accelerate your digital infrastructure deployment, speeding your benefits and providing you the skills to adapt and optimize IT change.

Intelligent Cloud Infrastructure for Applications

Bringing the power of cloud to thousands of customers like you, innovative converged and hyperconverged solutions that increase product release velocity, simplify cloud operations, and reduce TCO.

Transform Your Core and Cloud

Redefine enterprise IT with best-in-class performance and availability for your most critical business applications.

Modernize Your Apps and Infrastructure

Simplify, scale, and save with next-gen HCI appliances delivering low-latency infrastructure and advanced policy-based automation to modernize IT.

Hybrid Cloud Made Simple!

Increase business agility and automation with a seamless hybrid cloud powered by VMware Cloud Foundation.

Future-proof your data infrastructure with Cisco and Hitachi Adaptive Solutions

Flexibility, scale, and performance to make tomorrow's technology work for you today.

Automated Image Inferencing and Processing

AI-driven image-based inspection automates defect detection for equipment assets to lower costs, reduce risks, and enhance worker safety.

Services

Your Applications. Always-on. Reliable. Cost-Effective.

Comprehensive services to optimize resilience and cost for always-on business. Design, build, run, and operate workloads across private, public, hybrid, and multicloud environments.

Visit App Reliability Centers

Maximize Your Cloud Investment and Align Spend with Business Goals.

Transform cloud financial management with complete visibility into cloud usage and costs.

Explore FinOps Services

Build and Scale Cloud, Applications, Data, and IoT Solutions with Confidence.

A range of options to help you reinvent, innovate, transform, and scale. Rely on our global network of experts, supported by powerful assets, platforms, and partnerships.

Explore Consulting Services

Reimagine, Re-engineer, or Reinvent Your Innovation Roadmap.

Your digital transformation journey starts here with experts ready to help you drive innovation, superior business outcomes, and value.

See Digital Strategy Services

Deliver Your Applications, Your Way, with Proven Experts, Tools, and Processes.

Rearchitect core applications to optimize resilience and scalability, and drive superior experiences and innovation from the ground up.

Learn About App Modernization

Achieve Clarity with Any Cloud and Every Cloud. It’s Cloud Migration, Done Right.

Fast track cloud transformation by moving applications and workloads using our end-to-end cloud modernization services.

Explore Cloud Modernization

Deliver Superior Experiences for Competitive Advantage.

Build exceptional real-time, data-driven experiences to drive customer and brand engagement while improving growth and business value.

Visit Digital Experience Services

Expertise, Tools, and Processes to Build a Modern Data-Driven Organization.

Engage experts to create a modern data fabric, institute governance and DataOps, improve decision making, and optimize the value of enterprise data.

Learn About Data Modernization

Build AI-Driven Solutions and Outcomes, to Grow Your Business, Your Way.

The experience, analytics, frameworks, and organizational knowledge to extract superior insight and value from your data, fueling competitive advantage and business growth.

Explore AI & Insights Services

Fast-Track Innovation in SAP Environments, Unleashing Agility and Growth.

Maximize value from SAP ERP applications with our expertise and support. Leverage data to drive insights and create innovative solutions to your toughest business challenges.

Explore SAP ERP Modernization

Expert People and Processes to Drive Innovation in Oracle ERP Environments.

Optimize your Oracle applications with our expert support. Improve agility, performance, and results through better business decisions and processes.

Visit Oracle ERP Modernization

Deep IT and OT Expertise, Solutions and Services for Superior Industry Outcomes

Digitize your physical operations and assets to achieve data-driven modernization and next-level business outcomes and ROI through industry-tested consulting services.

Learn More About IoT Services

Scale quickly with Managed Cloud, Application, Data, and Infrastructure Services.

Streamline IT operations, enhance performance and agility, and drive growth with managed services for application and infrastructure management.

Explore Managed Services

Maximize Performance and Value from Datacenter to Cloud.

Accelerate deployments and migration for improved operational efficiency and reduced TCO, with a range of Edge to Cloud Infrastructure Services.

Explore Edge to Cloud Services

Speed Time to Market and Realize Better Outcomes.

Improve competitive advantage and control of your infrastructure and service levels with expert strategy, design, and operational services.

Visit Infrastructure Services

Optimize Value and Benefits of Your Storage Investments.

Deliver superior uptime while reducing risk with a range of data migration, storage implementation, data protection, and workflow automation services.

Explore Services for Storage

Simplify Management of Complex Kubernetes Environments.

Simple, seamless deployment and control of your complex private, hybrid, and multicloud Kubernetes environments and associated enterprise application ecosystems.

Learn About Kubernetes Service

Keep Your Business Running at Peak Performance.

Free your own people to focus on driving innovation and growth with a variety of service levels and options to proactively prevent issues or disruptions to your business.

See Customer Support Services

Speed Timelines, Plan for Innovation, and Reduce Risk.

Single point of contact and VIP support for ongoing guidance, best practices, and managing feature requests. Ideal for complex data management, integration, IoT, and analytics initiatives.

Lumada & Pentaho Support Services

Technical Expertise to Meet Your Unique Requirements.

Flexible, expert tiered service portfolio designed to help you save time, control costs, and accelerate responses to your unique business and technical requirements and challenges.

Visit Preferred Customer Services

Expert Training to Give You an Edge Over the Competition.

A variety of options for every step in your learning journey. Get up to speed fast, become a product or solution expert, and get the most out of your valuable investment.

Learn More About Training

Enhance and validate your skills, knowledge and value.

Comprehensive, two-tiered program to build your expertise and advance your goals and career. Track your progress and gain recognition through professional certifications and digital badges.

Learn More About Certification

Newsroom

Partners

Join Our Program

Grow your business with our simple and profitable Partner Program. Our partner-centric approach means we're here to help you succeed.

Your Next Opportunity

Co-created Solutions with Technology Innovators

Benefit from solutions built with top technology and cloud partners that accelerate time to value and reduce risk.

Meet our Technology Alliances

Cost Effective and Flexible Cloud and Managed Services

Transform your business with Hybrid Cloud Services from our ecosystem of Cloud Service Providers with as-a-Service solutions that are flexible, predictable, secure and efficient.

Meet our Cloud Service Providers

Digital Transformation for Business and IT

Accelerate your digital journey and gain competitive advantage with business and IT services that transform your business.

Meet our Global System Integrators

Outcome-centric Service Delivery

Maximize the value of your technology investments with services that deliver outcomes for your business.

Meet our Service Delivery Partners

Experts To Help You Succeed

Find Hitachi Vantara authorized partners who are qualified to help meet your unique business needs.

Find an Expert Partner

Tools and Resources to Accelerate Opportunities

Develop new opportunities and accelerate your sales with configuration tools, demos, training, incentives and more.

Login Now

Company

Hitachi Vantara - For the Data-Driven

Hitachi Vantara, a wholly owned subsidiary of Hitachi Ltd., delivers the intelligent data platforms, infrastructure systems, and digital expertise that support more than 80% of the Fortune 100. Learn how Hitachi Vantara turns businesses from data-rich to data-driven through agile digital processes, products, and experiences.

Explore Company

Contact Us

To talk to a representative, call 1.678.403.3035.

You’re in the Right Place!

Hitachi Data Systems, Pentaho and Hitachi Insight Group have merged into one company: Hitachi Vantara.

The result? More data-driven solutions and innovation from the partner you can trust.

You’re in the Right Place!

REAN Cloud is now a part of Hitachi Vantara.

The result? Robust data-driven solutions and innovation, with industry-leading expertise in cloud migration and modernization.

You’re in the Right Place!

Waterline Data is now Lumada Data Catalog, provided by Hitachi Vantara. Lumada Data Catalog, available stand-alone, is now part of the Lumada Data Services portfolio.
